Risset Beats

- Purpose

- Some Experimentation in ChucK

- Some Experimentation in C

- The Final Product: An Improvement in C++

- Results

- Code Summary

- Conclusions

- References

Purpose

My work here is inspired by some of Jean-Claude Risset's work with beats as a form of musical expression. In this project, I set out to use the concept of beating frequencies to compose music. The idea is to add together, for each note in a song, many cosine waves that are very close together in frequency, so that at particular points in time that note stands out: all of the adjacent frequencies in the note come into phase together. For instance, 440.0 Hz, 440.1 Hz, and 440.2 Hz signals line up once every 10 seconds, because each adjacent pair (440.0/440.1 Hz and 440.1/440.2 Hz) beats at 0.1 Hz. What makes this unique is that the program only needs to determine the sine waves once, at the very beginning, and can then let them run forever without changing anything (the system is completely time-invariant). The song should repeat infinitely, with the notes that stand out, and when they stand out, chosen ahead of time.
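Writing out the three-signal example (assuming all three cosines start in phase at t = 0) shows where the peaks fall:

$$\cos(2\pi \cdot 440.0\,t) + \cos(2\pi \cdot 440.1\,t) + \cos(2\pi \cdot 440.2\,t) = \bigl(1 + 2\cos(2\pi \cdot 0.1\,t)\bigr)\cos(2\pi \cdot 440.1\,t)$$

The envelope $|1 + 2\cos(2\pi \cdot 0.1\,t)|$ reaches its maximum of 3 at t = 0, 10, 20, ... seconds. At t = 5, 15, ... seconds the outer pair (440.0/440.2 Hz) is back in phase but each adjacent pair is not, so the envelope only rises to a smaller bump of height 1.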

I'm doing this because it seems like a neat way to make music; it relies solely on beats, which I expect will give rise to a unique timbre. Also, the whole thing is time-invariant, which seems cool to me since, intuitively, most songs seem highly time-varying. The challenge will be to determine the phase of each sine wave and the number of sine waves needed so that each note "plays" only once, at the correct time, in a chosen interval, and so that the attack on each note is distinct (and the notes don't just blur together all over the place).

Some Experimentation in ChucK

I started out in ChucK with sine oscillators to do some experimentation. Before I could go too far, I had to do some math:

- Let L be the length of the song in seconds, and T be the "hitting time" of a particular note

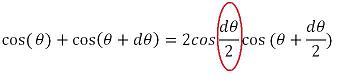

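- Note that, by the trigonometric sum-to-product identity, two cosines at frequencies f and f + Δf add to

  $$\cos(2\pi f t) + \cos\bigl(2\pi (f + \Delta f) t\bigr) = 2\cos\left(2\pi \frac{\Delta f}{2} t\right)\cos\left(2\pi \left(f + \frac{\Delta f}{2}\right) t\right)$$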

  So two frequencies that are very close together (separated by Δf) can be viewed as a single frequency at their average, modulated by a cosine whose frequency is half their difference. Because the envelope's magnitude peaks twice per cycle of that modulating cosine, the envelope reaches an extremum (positive or negative) at a rate exactly equal to the difference between the two frequencies. So, in essence, a "beat" is heard at a frequency equal to the difference between the two.

- The first thing to do is to make sure that each note will only "occur" (stand out sharply) once per time interval. This means that the beat should reach an extremum with a period equal to the time interval L. Therefore, using the math above combined with the fact that period = 1/frequency, the frequency separation should be 1/L for the frequencies that make up a note, so that the note occurs exactly once in the interval.

- The next challenge is to determine the phase needed to get the beat to occur at an exact time T during the interval L. Since a beat occurs at time zero when two close frequencies are added together with no phase offset, each frequency that contributes to the beat needs to be time-shifted by T. For a sine defined as sin(2PI*F*(t-T)) = sin(2PI*F*t - 2PI*F*T), the phase should be -2PI*F*T for this to happen.

- The only remaining challenge is to determine how many frequencies close to a base frequency are enough to get the sharp attack needed at the chosen time. I determined this by experimentation, using a ChucK program I wrote (rissetbeats.ck) that let me specify arbitrary frequencies, the song length (L), the time of attack of each note (T), and the number of close-together frequencies per note. I first started out with just two frequencies per note (only one beat), but found that the beat rose far too gradually, and it was difficult to pick out exactly when the note's amplitude was supposed to peak if I didn't know ahead of time. I usually determine the number of frequencies experimentally on a case-by-case basis. If the tempo of the piece is faster, more beats are needed, since the notes need to reach a peak faster. More beats are also needed if the piece is longer, since the period of the beats goes up, making them more gradual (so more interference is needed at the exact point). That ChucK file currently has a five-second example using this scheme coded into it.
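The original ChucK code isn't reproduced here. As a rough stand-in, the math in the list above can be sketched in C++. The names `Component`, `noteComponents`, and `evaluate` are hypothetical, and the sketch assumes unit amplitudes and the plain 1/L spacing (f0, f0 + 1/L, f0 + 2/L, ...). It builds one note's cosines with phase -2PI*f*T and evaluates their sum at a given time.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

const double kPi = 3.14159265358979323846;

// One cosine component: frequency in Hz and phase in radians.
struct Component {
    double freq;
    double phase;
};

// Builds n cosines for a note at base frequency f0 whose combined beat
// peaks at attack time T within a song of length L seconds.
// Spacing is 1/L Hz, so adjacent-pair beats repeat exactly once per L.
// Each phase is -2*pi*f*T, i.e. the cosine is cos(2*pi*f*(t - T)).
std::vector<Component> noteComponents(double f0, double T, double L, int n) {
    assert(L > 0.0 && n > 0);
    std::vector<Component> comps;
    for (int k = 0; k < n; ++k) {
        double f = f0 + k / L;
        comps.push_back({f, -2.0 * kPi * f * T});
    }
    return comps;
}

// Evaluates the sum of all components at time t (seconds).
double evaluate(const std::vector<Component>& comps, double t) {
    double sum = 0.0;
    for (const Component& c : comps) {
        sum += std::cos(2.0 * kPi * c.freq * t + c.phase);
    }
    return sum;
}
```

For example, `noteComponents(440.0, 2.0, 5.0, 20)` gives 20 cosines spaced 0.2 Hz apart; their sum is 20 at t = 2 s, again at t = 7 s (one period L later), and close to zero at t = 4.5 s, halfway between.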

Some Experimentation in C

I created a program in C that takes four arguments: an input file that has a musical score, the length of the output song in seconds, the number of cosines to add together for each note, and the output wav filename.

The format of the input files was as follows:

- At the top of each file, put an integer that says how many notes are in the piece.

- Every subsequent line has "notename, length", where notename is an integer giving the offset in halfsteps from a 440 A (so, for instance, the G right below it would be -2), and length is an integer giving the length of the note in sixteenth notes (or some other fundamental unit of rhythm the composer wants). For example, the following input file:

```
8
-7, 4
-5, 4
-3, 4
-2, 4
-7, 2
-5, 2
-3, 2
-2, 2
```

will play D E F# G first slowly as quarter notes, then D E F# G at double the speed as eighth notes.
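A minimal reader for this format might look like the following C++ sketch. `ScoreNote` and `parseScore` are hypothetical names (the original C parser isn't shown), and the sketch assumes whitespace-separated integers with a single comma between the two fields on each line.

```cpp
#include <cassert>
#include <istream>
#include <sstream>
#include <vector>

// One score entry: halfstep offset from A440 and length in sixteenth notes.
struct ScoreNote {
    int halfsteps;
    int length;
};

// Parses a note count on the first line, then one "notename, length" pair
// per line. Returns false on malformed input.
bool parseScore(std::istream& in, std::vector<ScoreNote>& notes) {
    int count = 0;
    if (!(in >> count) || count < 0) return false;
    notes.clear();
    for (int i = 0; i < count; ++i) {
        ScoreNote note;
        char comma = 0;
        // operator>> skips spaces and newlines between fields
        if (!(in >> note.halfsteps >> comma >> note.length) || comma != ',') {
            return false;
        }
        notes.push_back(note);
    }
    return true;
}
```

Wrapping the example file above in a `std::istringstream` and passing it to `parseScore` yields eight entries, starting with {-7, 4} and ending with {-2, 2}.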

As usual, the program has a function that converts a halfstep offset from a 440 A into a frequency (using a frequency multiplier of 2^(1/12) for every halfstep up), and this conversion is done for each note. To determine the time of attack for each note (which is used to determine the phase, as I explained before), the program first counts the total number of sixteenth notes and then divides the song length the user specified by that number. This quotient gives the length of a sixteenth note in seconds. The first note occurs at time zero, the next note occurs at (length of a sixteenth note) * (length of the first note in sixteenth notes), and so on. With the time of attack, the phase for each frequency in a note can be calculated as -2PI*f*T, where f is the frequency of each cosine in the note and T is the time of attack.
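The pitch and timing steps described above could be sketched as follows. `halfstepToFreq` and `attackTimes` are illustrative names, not taken from the original C code; lengths are in sixteenth notes and L is the song length in seconds.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

// Converts a halfstep offset from A440 into a frequency in Hz,
// multiplying by 2^(1/12) for every halfstep up.
double halfstepToFreq(int halfsteps) {
    return 440.0 * std::pow(2.0, halfsteps / 12.0);
}

// Given note lengths in sixteenth notes and the song length L (seconds),
// returns the attack time T of each note. The first note starts at 0 and
// each later note starts where the previous one ends.
std::vector<double> attackTimes(const std::vector<int>& lengths, double L) {
    int total = 0;
    for (int len : lengths) total += len;
    assert(total > 0 && L > 0.0);
    const double sixteenth = L / total;  // seconds per sixteenth note
    std::vector<double> times;
    double t = 0.0;
    for (int len : lengths) {
        times.push_back(t);
        t += sixteenth * len;
    }
    return times;
}
```

With the example score above and L = 6 s, the piece spans 24 sixteenth notes of 0.25 s each, so the attacks fall at 0, 1, 2, 3, 4, 4.5, 5, and 5.5 seconds.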

Once all of the frequencies and phases have been determined for each note, the cosines are added one by one to the final signal. After all frequencies have been added, the signal is rescaled to prevent clipping.
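The additive step and the rescaling could look roughly like this. The sample rate, the target peak of 0.9, and the names `Component` and `render` are assumptions for illustration; writing the samples to a WAV file is left out.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

const double kPi = 3.14159265358979323846;

// A cosine component with frequency (Hz) and phase (radians).
struct Component {
    double freq;
    double phase;
};

// Renders L seconds of the summed cosines at the given sample rate, then
// rescales so the largest absolute sample equals `peak` (kept below 1.0).
std::vector<double> render(const std::vector<Component>& comps, double L,
                           int sampleRate, double peak = 0.9) {
    assert(L > 0.0 && sampleRate > 0);
    const std::size_t numSamples = static_cast<std::size_t>(L * sampleRate);
    std::vector<double> out(numSamples, 0.0);
    for (const Component& c : comps) {  // add each cosine one by one
        for (std::size_t i = 0; i < numSamples; ++i) {
            double t = static_cast<double>(i) / sampleRate;
            out[i] += std::cos(2.0 * kPi * c.freq * t + c.phase);
        }
    }
    double maxAbs = 0.0;
    for (double s : out) maxAbs = std::max(maxAbs, std::fabs(s));
    if (maxAbs > 0.0) {
        for (double& s : out) s *= peak / maxAbs;  // rescale to prevent clipping
    }
    return out;
}
```

Each cosine is evaluated at every sample, so the cost grows with (number of cosines) * (number of samples), which is what makes adding every note's cosines separately slow for long pieces.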

The code I wrote to do all of this is in rissetbeats.c.

The Final Product: An Improvement in C++

Although the previous solution in C completed the task correctly, it turned out to be extremely inefficient. As I mentioned, the user specifies how many cosines should be added together for each note, so the number of cosines needed is literally (the number of notes) * (the number of cosines per note). This means that if it takes roughly a second to fill my 66.6-second musical statement (160 notes) with 4 cosines, and I use 500 cosines per note, then it will take (160 notes) * (500 cosines / note) / (4 cosines / sec) * (1 minute / 60 sec) * (1 hour / 60 minutes) = 5.56 hours(!) to calculate.

I realized that if I want n cosines per note, I should really only have to use n cosines for every distinct note played in the piece. This is because the sum of two cosines of the same frequency is also a cosine of that frequency, just with a different amplitude and phase. For instance, if a piece has the structure (F# F# G A A G F# E D D E F# F# E E), there are only 5 distinct notes (D E F# G A), so I should only need (number of cosines per note) * (5 distinct notes) cosines for the whole piece, as opposed to (number of cosines per note) * (15 total notes) cosines. For really long pieces the savings will be even greater, since each note is likely to be used dozens of times.
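The merge rests on the standard phasor identity. Written for two copies of the same cosine with attack times $T_1$ and $T_2$ (so phases $-2\pi f T_1$ and $-2\pi f T_2$, as above), it reads:

$$\cos\bigl(2\pi f (t - T_1)\bigr) + \cos\bigl(2\pi f (t - T_2)\bigr) = \operatorname{Re}\!\left[\left(e^{-i 2\pi f T_1} + e^{-i 2\pi f T_2}\right) e^{i 2\pi f t}\right] = A\cos(2\pi f t + \phi)$$

where $A = \left|e^{-i 2\pi f T_1} + e^{-i 2\pi f T_2}\right|$ and $\phi = \arg\left(e^{-i 2\pi f T_1} + e^{-i 2\pi f T_2}\right)$. Any number of repeats collapses the same way, so with n cosines per note the 15-note example needs 5n cosines rather than 15n.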

I was able to merge the same notes at different times by using phasors. To accomplish this, I switched over to C++ so that I could use an STL map as a table for my phasors. That is, I created a map whose keys are frequencies and whose values are phasor objects. This way, as I went through all of the frequencies from note to note, I could check whether a frequency had already been used, and if it had, I would simply add a phasor to it. Another improvement I made was to space the "close by" frequencies for each note evenly on both sides of the note. Before, I simply added 1/songlength to get each successive frequency.
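A phasor table along these lines might look like the sketch below. It stores each frequency's complex amplitude in a `std::map<double, std::complex<double>>`; `PhasorTable`, `addNote`, and `evaluate` are hypothetical names, the spacing is 1/L Hz centered on the note as described above, and amplitudes are assumed to be 1. Using `double` keys works here only because the same note always produces bit-identical frequencies.

```cpp
#include <cassert>
#include <cmath>
#include <complex>
#include <map>

const double kPi = 3.14159265358979323846;

// Phasor table: frequency (Hz) -> complex amplitude A * e^{i*phi}.
using PhasorTable = std::map<double, std::complex<double>>;

// Adds one note with n cosines spaced 1/L Hz apart, centered on f0, whose
// beat peaks at attack time T. A frequency already in the table gets the
// new phasor added to it instead of becoming a new cosine.
void addNote(PhasorTable& table, double f0, double T, double L, int n) {
    assert(L > 0.0 && n > 0);
    for (int k = 0; k < n; ++k) {
        // Offsets run from -(n-1)/2 to +(n-1)/2 steps of 1/L around f0.
        double f = f0 + (k - (n - 1) / 2.0) / L;
        // Phase -2*pi*f*T makes this cosine line up at time T.
        table[f] += std::polar(1.0, -2.0 * kPi * f * T);
    }
}

// Evaluates the merged signal at time t: sum of |P| * cos(2*pi*f*t + arg P).
double evaluate(const PhasorTable& table, double t) {
    double sum = 0.0;
    for (const auto& entry : table) {
        const double f = entry.first;
        const std::complex<double>& p = entry.second;
        sum += std::abs(p) * std::cos(2.0 * kPi * f * t + std::arg(p));
    }
    return sum;
}
```

Adding the same 10-cosine note at two different attack times leaves the table at 10 entries, and the merged signal still reaches 10 at each attack time; the per-sample work drops from (notes * n) cosines to (distinct notes * n).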

The C++ code I ended up with after these modifications is in rissetbeats.cpp.

Results

Here are some example executions of my program with different scores and different parameters, including my eventual "66.6-second musical statement."

| Piece | Score | Command | Output | Total cosines | Frequency list |
|---|---|---|---|---|---|
| G Arpeggio (10 sec) | arpeggio.txt | `beatsynth arpeggio.txt 10.0 100 arpeggio.wav > arpeggio.freq` | arpeggio.wav | 700 | arpeggio.freq |
| G Arpeggio (5 sec) | arpeggio.txt | `beatsynth arpeggio.txt 5.0 100 arpeggio5.wav > arpeggio5.freq` | arpeggio5.wav | 700 | arpeggio5.freq |
| G Arpeggio (2.5 sec) | arpeggio.txt | `beatsynth arpeggio.txt 2.5 100 arpeggio2.5.wav > arpeggio2.5.freq` | arpeggio2.5.wav | 700 | arpeggio2.5.freq |
| Happy Birthday (10 sec) | birthday.txt | `beatsynth birthday.txt 10.0 60 birthday.wav > birthday.freq` | birthday.wav | 480 | birthday.freq |
| "Wanna Be Startin' Somethin'" (Michael Jackson): my 66.6-second musical statement | wanna.txt | `beatsynth wanna.txt 66.6 500 wanna.wav > wanna.freq` | wanna.wav | 6000 | wanna.freq |

Note that I needed far more cosines per note in the last example because it was 66.6 seconds long. This means that the adjacent beat frequencies were very slow (since I wanted each beat to occur only once every 66.6 seconds). Since the beats were slower, they reached a peak much more gradually, so I needed to add many more of them together to get the sharp attack needed.
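One way to quantify this, assuming equal amplitudes and exactly 1/L spacing: n aligned cosines spaced 1/L Hz apart have an envelope of |sin(PI*n*tau/L) / sin(PI*tau/L)|, where tau is the time since the attack, so the peak first falls to zero L/n seconds after the attack. The sketch below computes both quantities; `beatEnvelope` and `attackHalfWidth` are hypothetical names.

```cpp
#include <cassert>
#include <cmath>

const double kPi = 3.14159265358979323846;

// Envelope magnitude of n equal-amplitude cosines spaced 1/L Hz apart,
// all aligned at the attack time, evaluated tau seconds from that time.
// This is |sin(pi*n*tau/L) / sin(pi*tau/L)|, which equals n at the peak.
double beatEnvelope(double tau, double L, int n) {
    assert(L > 0.0 && n > 0);
    double x = kPi * tau / L;
    double s = std::sin(x);
    if (std::fabs(s) < 1e-12) return static_cast<double>(n);  // at a peak
    return std::fabs(std::sin(n * x) / s);
}

// Time from the peak to the envelope's first zero: L / n seconds.
double attackHalfWidth(double L, int n) {
    assert(L > 0.0 && n > 0);
    return L / n;
}
```

By this measure, the 10-second arpeggio with 100 cosines has a half-width of about 0.1 s, Happy Birthday (10 s, 60 cosines) about 0.17 s, and the 66.6-second piece with 500 cosines about 0.13 s. Centering the frequencies on the note, as the C++ version does, only changes the phase, not this envelope.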

Code Summary

| File | Description |
|---|---|
| rissetbeats.ck | To test out basic concepts before moving on to more complicated code |
| rissetbeats.c | A first (inefficient) implementation of the final product, to test synthesizing output based on a musical score |
| (*)rissetbeats.cpp | A refined version of the program that merges identical frequencies into phasors for more efficient processing |

Conclusions

This design met the specifications for using beating cosine waves to have notes of precise frequency "pop out" at precise times during a song. What makes this method of composition so neat is that, technically, all notes are being played all the time throughout the entire song; but when each note's turn comes, it pops out of the background at just the right moment. This makes for a really cool, almost underwater effect. Because the attacks of the notes are not infinitely sharp (it takes some time for the beats to rise to a peak), the music does sound rather blurry. Also, because all notes are in the background simultaneously, the music has an ethereal, otherworldly timbre. What makes that even cooler is that, as a note gets close to peaking, the listener can sort of anticipate it, because other beats created nearby begin to increase in amplitude (this is especially obvious when the piece is about to jump way up in pitch).

Since this was merely a demonstration of a concept, there are lots of extensions that could be made if this were refined into an actual composition tool. One of the first things I would do is extend the program to play chords. Nothing special should have to be done other than allowing two or more notes to play at the same time (all of the math should be the same). Also, I came up with my own specification for musical scores, but it would be more natural to let my program read MIDI files, so that, in effect, it becomes a MIDI synthesizer (with the note-on commands, it should be especially easy to figure out exactly when each note's attack is). In terms of perceptual quality, I might add the capability for users to specify harmonic patterns for each note, so that notes could sound richer and perhaps even sound like actual instruments in a weird way. Lastly, I would create a basic pitch detection program that steps through an input sound file (using the FFT, presumably), looks for 4-5 frequency peaks in every small increment of time, and feeds that information to my beat synthesizer (to resynthesize the most important notes from the input sound as beats).
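As a sketch of the MIDI and chord ideas (not something implemented in this project): MIDI note number 69 is the A at 440 Hz, so a note number converts to this program's halfstep offset by subtracting 69, and a chord is just several notes sharing one attack time. The `NoteOn` and `Attack` structs and both functions are hypothetical, and the events are assumed to have already been parsed from a MIDI file.

```cpp
#include <cassert>
#include <vector>

// Hypothetical note-on event, assumed already parsed from a MIDI file
// (MIDI file parsing itself is not shown).
struct NoteOn {
    int midiNote;    // MIDI note number; 69 is the A at 440 Hz
    double seconds;  // time of the note-on message
};

// One note for the beat synthesizer: halfstep offset and attack time T.
struct Attack {
    int halfsteps;
    double T;
};

// Halfstep offset from A440, the unit used by the score format above.
int midiToHalfsteps(int midiNote) {
    return midiNote - 69;
}

// Converts note-on events to attacks. Chords need no special handling:
// notes sharing a note-on time simply share the same attack time T.
std::vector<Attack> toAttacks(const std::vector<NoteOn>& events) {
    std::vector<Attack> attacks;
    for (const NoteOn& e : events) {
        assert(e.midiNote >= 0 && e.midiNote <= 127);
        attacks.push_back({midiToHalfsteps(e.midiNote), e.seconds});
    }
    return attacks;
}
```

For instance, a G major chord (MIDI notes 67, 71, 74) at time 0 becomes offsets -2, 2, and 5, all with T = 0.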

References

- C++ STL reference

- Perry Cook's waveio.h
